Fixing Persistent Peer-Review Problems

Now that AI is forcing our hand

Recently, Scott Cunningham (here), Paul Goldsmith-Pinkham (here and here), and Brian Heseung Kim (here) have written articles about how AI is accelerating the research process and the headache that this will cause for peer review. David Oks yesterday added a pessimistic perspective on how citation culture corrupted science and how AI is accelerating existing problems.

I wanted to use this post to review their critiques and proposals and also share some potential solutions as I’ve thought about their arguments. Along with these recent AI-centric critiques of the peer review process, I wanted to also throw it back to John H. Cochrane who wrote about the problems with peer review back in 2017 (a year before GPT-1 was released!) and whose recommendations for improvement have become all the more important as the pace of research accelerates.

What previous posts have suggested

To briefly summarize:

Goldsmith-Pinkham emphasizes that papers will need to be indexed differently so that they are “LLM-friendly” and can have their insights correctly extracted.

Cunningham points out that accelerated research productivity (driven both by more-productive existing researchers and by newly activated researchers who wouldn’t have published anything before AI) will overwhelm the existing peer review process because human peer review is the new bottleneck. (The old bottleneck was production of useful research.) As a result, review queues will lengthen and manuscripts per reviewer will increase.

Kim suggests splitting the publication pipeline into separate components for (1) automated verification and dissemination of verified claims at scale (what he calls “atomic empirical claims”) and (2) “complex research” and research requiring proprietary data (reserved for the traditional academic journal system). Oks echoes this by saying, “the most efficient unit of scientific contribution might [become] a living document, perhaps even just a GitHub repo.”

Cochrane identifies several issues with the status quo. For starters, the process takes forever and authors cannot submit “in parallel”; they have to submit sequentially to different journals. Most notably, at the heart of the problems with peer review is that academic journals serve three simultaneous purposes but are well-suited for only one of them. Those three purposes are: (1) communication; (2) credentialing; and (3) archiving (the only purpose for which the journal was designed). Pre-prints (like the unpublished NBER working paper series) have overtaken the role of communication, and universities are the stakeholders that require credentialing (for tenure and promotion decisions); journals are not designed to help with that.

Given the potential disruption of agentic AI coding tools like Anthropic’s Claude Code or OpenAI’s Codex (or, if you buy into the hype, autonomous agentic AI scientists), now seems like a good time to review the existing issues with peer review, as well as the new ones on the horizon, in hopes that we can redesign the system now before the “storm” hits.

What do I propose?

My proposal focuses on four reforms, though each of them is extremely unlikely to be implemented. But we’re here to talk ideas, so let’s at least think outside the box!

1. Set up a parallel verification organization that does what I call “automated and distributed peer review” (use AI for a lot of the verification but have many humans answer brief surveys about sections of the manuscript, and only do this once)

2. Allow manuscripts to be simultaneously submitted to multiple journals

3. Dispose of traditional reviewers and build out journal editing teams (i.e. move traditional referees into associate editor roles and have AI serve as a first-pass reviewer, like Cochrane mentions here in his review of refine.ink)

4. Reform what counts as “certification” for tenure

To help provide more context for these proposals, I also wanted to cover what constitutes peer review right now.

How does peer review work right now?

This is largely based on my having served as a reviewer approximately 175 times in my career so far. I am also currently a co-editor at the journal Economics of Education Review, though I’m only a few months into that role. This process is largely similar across different scientific fields, though the details will vary (for example, I understand that submission fees are much higher in some fields compared to others).

Authors

Here’s the rough process for getting an article published in an economics journal, from the author’s perspective:

1. produce a manuscript

2. submit the manuscript to a journal, possibly after paying a submission fee of $50-$200

3. the journal editor selects “referees” (peer reviewers), who are anonymous to the author, to provide comments on whether the paper is publishable and what edits would be required to make it so

4. wait for several months (2 months if you’re lucky; 9-12 months if you’re not)

5. get a decision letter from the journal’s editor, accompanied by 2-4 “referee reports”

6. if rejected, make edits to the manuscript and repeat steps (2)-(5) at a different journal; possibly with some overlap in the referees

7. if invited to revise, edit the manuscript and produce a lengthy “response document” that includes details as to how you modified the manuscript to incorporate each reviewer’s suggestions

8. resubmit the revised manuscript

9. wait for several more months

10. hopefully the paper is now accepted, but you may have to repeat steps (7)-(9) one or two more times before the paper is finally accepted

11. if the paper is rejected after your revisions, you are out of luck and need to go back to the (2)-(5) loop

12. if the paper is accepted, go through a post-acceptance process to work with journal copyeditors to make the content conform to the journal’s standards

13. wait for a while (2 weeks to 3 years) until it finally gets published (yes, that’s right: some accepted articles are listed as “forthcoming” for several years before officially being published)

As you can probably tell, this entire process ends up taking years, and that’s before taking into account the backlogs of step 13.

Reviewers

What does the process look like for a reviewer?

1. randomly receive an email from an editor to review a particular manuscript

2. decide whether to review it

3. spend several hours reading the paper and drafting a list of suggestions for improvement, as well as a letter to the editor summarizing your recommendation

I reckon that I spend about 50-60 hours per year on refereeing. That shakes out to about 3%-4% of my total work hours. It doesn’t sound like much, but it feels like more than that, probably because these hours are “on the margin” of leisure and only very rarely am I financially compensated for my time spent reviewing.

Editors

I’ve only just started my editor role, but the process looks like this:

1. receive an email from the editor-in-chief asking me to “handle” a manuscript (i.e. take the lead on conducting its peer review process)

2. decide whether the manuscript is close enough to publishable that it merits sending out to reviewers (failure to meet this standard is known as a “desk reject,” meaning the manuscript gets returned to the author(s) with no referee or editor feedback)

3. if the manuscript gets past the desk, send emails to referees who may be qualified to review the article

4. most of the time, at least one referee says no, so it’s important to come up with a long list of potential referees at the outset so that you can work your way down the list until at least two of them agree

5. send reminders to the referees 4-6 weeks later unless they’ve turned their report in already

6. once all referees have turned in their reports, review the paper alongside the referee reports, draft a decision letter to the authors, and send the letter and the reports back to the author

7. if the decision is “revise and resubmit” then repeat (3)-(6) after the manuscript has been resubmitted (99% of the time the same set of referees will review the revised manuscript, so this is mostly just steps 3 and 6)

8. repeat (7) until the paper is either rejected or accepted

Issues with the system

Why use this silly system?

To an outsider, this system looks backwards and ridiculous. But Cochrane explains the utility of this system: it’s to make sure that whatever is proverbially etched in stone at the journal’s archive is as pristine and polished as possible. This is the “archive” function that journals serve. It’s also why the journals can be used as certification instruments and why they don’t serve the communication function very well. As Cochrane writes about one of his papers, post-peer-review:

[T]his paper is perfected. The comments of nine very sharp reviewers and three thoughtful editors have improved it substantially, along with at dozens of drafts. Papers are a conversation, and it does take a village. The paper also benefitted from extensive comments at workshops, and several long email conversations with colleagues.

(Original emphasis.) Why were there nine reviewers and three editors? Because he sent the paper to multiple journals, and each journal had a new crop of reviewers.

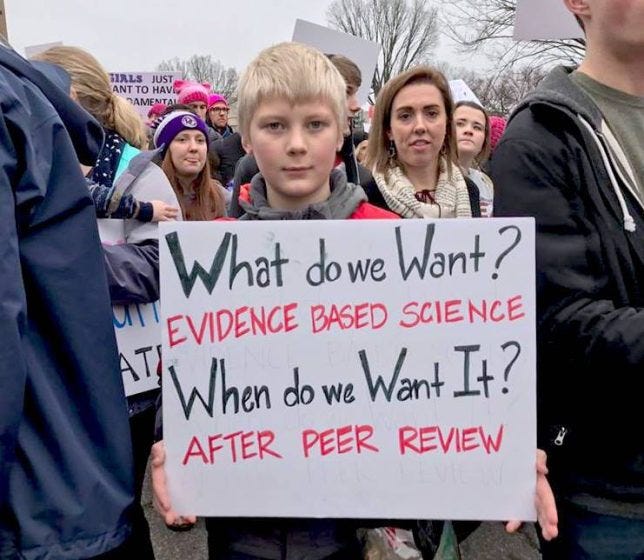

This reminds me of the following clever and scathing indictment of everything good and bad about science.

What is wrong with the system?

Aside from how long it takes, there are several drawbacks:

There is a lot of wasted time because submissions must happen serially instead of in parallel.

A side effect of this is that referee reports are often not shared between journals (though this is frequently done if you sequentially submit to multiple journals in the same “brand,” like the nine journals managed by the American Economic Association).

As a result, downstream editors usually do not have access to upstream referee reports.

Another result of this is that feedback tends to have a “diminishing marginal product,” meaning that the 8th and 9th referees to see a paper will most likely make the same suggestions as the 3rd and 4th referees and hence add very little value to the final quality of the publication.

Another side effect is that some referees may be tabbed multiple times to review the same manuscript, so there aren’t any new “perspectives” being added during additional rounds of review. This is probably a good thing because it limits the total time spent on the manuscript. (This has happened to me as a referee four times in the past year … for one paper I was selected as a referee at the first, second, and third journals the manuscript was sent to. It was fairly easy to do these downstream referee reports, but it still took a non-trivial amount of time for me to verify which changes were made to the manuscript and then produce a new set of suggestions.)

All correspondence about the article is hidden from public view, so a reader won’t know what the referees suggested, what the editor decided, how the manuscript was modified, who the referees even were, how many referees there were, or any other details.

Because of the above time lags, no one really reads the final published manuscript. It’s primarily a reference for future citation. (I’m exaggerating a bit, but not too much: I conjecture that the greatest number of readers for a given paper come when it is at the pre-print stage.)

Universities rely on journals for certification to inform them about tenure and promotion decisions. But the editor and the referees don’t/shouldn’t care about the authors’ tenure cases.

There are plenty of other negatives about the current system:

the fact that editors can hand-pick reviewers and hence decide which “peers” review the paper (this is most concerning for politically charged topics where personal preferences may outweigh truth-seeking … these types of conflicts of interest are never reported, in my experience)

authors’ incentives to selectively or strategically cite certain papers in hopes that the cited authors will be asked to serve as reviewers (again, concerning for politically charged topics where there are clear “tribes” independent of actual truth-seeking)

journals charge subscription fees to libraries and other organizations but do not pay the authors or reviewers (or, sometimes, even the editors) for their time and effort; journals employ a nominal copyediting team to make the final paper look professional, so the subscription fee is actually payment for the credentialing signal

As an example of this, I could set up my own journal if I wanted to: The Ransom Journal of Economics. But no one would submit to it because it’s completely new and uncredentialed, so it wouldn’t “count” for anything.

etc. (see Stuart Ritchie’s excellent book Science Fictions for a more complete list)

How will AI-accelerated research disrupt the current system?

There are at least three ways that AI will disrupt the current system, though I’m sure there are others out there that I haven’t thought about or paid attention to.

Reviewer Time

This is what the authors I initially referenced have been writing about. If every researcher can produce papers at even a 10% faster rate (an extremely conservative estimate), then journal submissions will noticeably increase. Even if journals expand their publication slots, the reviewer pool is still fixed, so the stock-flow identity that Cunningham laid out in his post implies that all authors will have to wait even longer for their editorial decisions. Moreover, as Cunningham points out in his post (and as the Cochrane post explains), this has been an issue long before the agentic AI era. Cunningham’s bigger fear is that there is a large swath of people with PhDs who never publish in academic journals, but who will now be able to. So it’s not only an acceleration at the intensive margin, but at the extensive margin as well. I am not sure about the risk of this, given that most non-academic PhDs don’t have any incentive to publish. But it is a non-zero risk.
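To make the queueing logic concrete, here is a minimal sketch of a stock-flow rule of the kind described above. It is my own simplified illustration, not Cunningham's actual formulation: the function name `simulate_backlog`, the monthly time step, and the numbers (100 manuscripts per month of reviewing capacity) are all assumptions chosen for illustration.

```python
def simulate_backlog(submissions_per_month, capacity_per_month, months,
                     initial_backlog=0.0):
    """Track manuscripts awaiting review under a simple stock-flow rule.

    Hypothetical illustration: backlog next month equals backlog this month
    plus new submissions minus the number the fixed reviewer pool can handle.
    """
    backlog = initial_backlog
    history = []
    for _ in range(months):
        # Stock next period = stock now + inflow - outflow (never below zero).
        backlog = max(0.0, backlog + submissions_per_month - capacity_per_month)
        history.append(backlog)
    return history


# Assumed numbers: reviewers can clear 100 manuscripts per month.
baseline = simulate_backlog(100, 100, months=12)
ten_pct_more = simulate_backlog(110, 100, months=12)

print(baseline[-1])             # 0.0   (inflow matches capacity, no queue)
print(ten_pct_more[-1])         # 120.0 (queue grows by 10 every month)
print(ten_pct_more[-1] / 100)   # 1.2   (extra months of waiting after a year)
```

The point of the sketch is that with a fixed reviewer pool, even a small permanent increase in submissions does not cause a one-time delay; the queue keeps growing for as long as inflow exceeds capacity.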

Reliance on LLMs to assist with reading papers

The other issue that Goldsmith-Pinkham has written about is that LLMs are actually bad at reading papers. Not that they can’t read the text, but that the PDF format that papers come in is not designed for machine consumption. It strips out structure and context in a way that exacerbates LLMs’ tendency to overgeneralize. So the LLM will lose critical context and nuance about the paper’s findings. (And, as Goldsmith-Pinkham writes, this is also sometimes the case for human readers!) Thus, researchers who rely on LLMs to summarize papers for them will come up with potentially overreaching or incorrect conclusions, which will then propagate through the research enterprise.

Widening variance in quality

Kim emphasizes that AI-accelerated research will amplify the variance of research quality, “from outright hollow slop to novel new research that expands far past what was previously ever feasible.” This will put more of a burden on editors to be even more decisive than they have (or haven’t) been (depending on who you ask).

What can we do to overhaul peer review?

The problems above are extremely difficult to solve, and AI innovation is progressing far faster than sclerotic university administrators or dusty academic journals can keep up with. Here are some changes I’ve been thinking about.

1. Set up a parallel verification organization with distributed peer review

The number one change is to reform peer review so that verification of facts and assessment of contribution takes place outside the journal ecosystem. We already do this to a certain extent with pre-registration of RCTs: the American Economic Association has an RCT Registry where authors can pre-register their experimental designs. Any journal that might publish the results of an RCT can send its editors and reviewers to the RCT Registry where they can check that the manuscript under consideration has complied with its pre-registered design. It also works well for post-publication scrutiny (see this Data Colada post).

So why can’t we do something similar for peer review? Set up a similar parallel organization, call it The Verification Org (TVO). Journals require all manuscripts to have gone through TVO before reviewing their submission. If it’s an empirical paper, then authors are required to submit code and data (if accessible) alongside the paper. (This moves in the direction of Oks’ vision.) TVO then automatically fact-checks the paper and generates a certification report. This report is submitted to academic journals alongside the manuscript. Journals can choose what to do with this report, but in my ideal case, they require its furnishing and use it as a substitute for a diligent-but-less-knowledgeable referee. Not too different from what they do now for the RCT registry. It’s an important but low hurdle to clear.

The other role of TVO is to distribute peer review. And this is mainly to guard against overgeneralized claims or absence of key citations (getting to the issues raised by Goldsmith-Pinkham). But it could also be used to assess a paper’s level of contribution. If a paper is about Topic X, but doesn’t cite seminal research on Topic X, then that’s a problem. Citations are probably the trickiest part of science and peer-review: they are often employed selectively for strategic rather than truth-seeking reasons. To get a handle on this, I envision TVO sending out very brief surveys to 20 or so authors per paper. The authors are knowledgeable, either because they are cited in the references, or because they should have been cited in the references. These surveys would ideally take less than 20 minutes each. They would present authors with the title and abstract and then solicit feedback on specific passages in the paper that the reviewers have expertise in. e.g. “Does the cited claim in the last sentence of paragraph 3 on p. 5 hold up?” Then the results of these surveys are posted as part of TVO’s certification report, including who was invited to participate and who ended up participating. Authors can then update their submission to TVO based on the feedback of the automated/distributed reviews, and this can be logged in a git-like version history. We can get creative with the details, but something like a Rotten Tomatoes score (for both veracity of claims and novelty of contribution) seems viable.
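As a rough illustration of how the survey results could roll up into a Rotten Tomatoes-style score, here is a minimal sketch. Everything in it is hypothetical: TVO does not exist, and the `SurveyResponse` fields, the `tvo_scores` function, and the simple "percent of respondents who agree" rule are assumptions of mine, not a worked-out design.

```python
from dataclasses import dataclass


@dataclass
class SurveyResponse:
    """One brief survey answer from an invited expert (hypothetical schema)."""
    reviewer: str
    claims_hold_up: bool   # did the flagged passage hold up?
    is_novel: bool         # does the paper add something new?


def tvo_scores(invited, responses):
    """Summarize surveys as percent-positive scores, plus participation counts."""
    n = len(responses)
    if n == 0:
        # No one answered: report participation but no scores.
        return {"invited": len(invited), "responded": 0,
                "veracity_pct": None, "novelty_pct": None}
    return {
        "invited": len(invited),
        "responded": n,
        "veracity_pct": round(100 * sum(r.claims_hold_up for r in responses) / n),
        "novelty_pct": round(100 * sum(r.is_novel for r in responses) / n),
    }


# Made-up example: five experts invited, four respond.
invited = ["expert_a", "expert_b", "expert_c", "expert_d", "expert_e"]
responses = [
    SurveyResponse("expert_a", claims_hold_up=True, is_novel=True),
    SurveyResponse("expert_b", claims_hold_up=True, is_novel=False),
    SurveyResponse("expert_c", claims_hold_up=True, is_novel=True),
    SurveyResponse("expert_d", claims_hold_up=False, is_novel=False),
]
print(tvo_scores(invited, responses))
# {'invited': 5, 'responded': 4, 'veracity_pct': 75, 'novelty_pct': 50}
```

Reporting the invited and responded counts next to the scores matters for the transparency goal: a 75% veracity score from four of five invitees reads very differently from the same score based on four of twenty.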

2. Allow simultaneous submission to journals

Cochrane advocated for this all the way back in 2017, but I’ve never heard it seriously entertained by anyone else. He points out that the current system of sequential submission benefits the top journals the most. It also significantly slows down the process and results in the wastage I outlined above. This wastage will get worse under Cunningham’s prediction.

Perhaps one reason simultaneous submission has never gained traction is that it would waste referee time due to duplicate reviews. But those duplicate reviews are happening anyway under the current system, just serially instead of in parallel. Centralizing verification through TVO would resolve this tension. The other benefit is it fosters competition between journals, which will put pressure on top journals to expand if there are more papers produced that are of sufficiently high quality. As Cochrane says, “We advocate competition elsewhere. Why not in our own profession?”
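A bit of back-of-the-envelope arithmetic shows why this matters for authors. The sketch below is my own simplification: the decision times are made-up numbers, the function names are hypothetical, and it ignores the time authors spend revising between submissions.

```python
def sequential_months(decision_months, accepted_index):
    """Author waits for each journal in turn, up to and including the acceptance."""
    return sum(decision_months[: accepted_index + 1])


def parallel_months(decision_months, accepted_index):
    """All journals review at once; the author waits only on the accepting journal."""
    return decision_months[accepted_index]


# Assumed example: three journals take 6, 4, and 5 months to decide;
# the first two reject and the third accepts.
waits = [6, 4, 5]
print(sequential_months(waits, accepted_index=2))  # 15
print(parallel_months(waits, accepted_index=2))    # 5
```

In both cases the same three sets of referees read the paper, so total referee effort is unchanged; the only thing that differs is how long the author waits.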

3. Dispose of traditional reviewers and build out journal editing teams

The main problem of oversubscribed peer review can be solved by a first pass of automated and distributed peer review (this is not a new idea). This leaves the journals free to curate and not have to certify. And in order to do this, journals will need to draft much larger editing teams (this is a new idea).

Currently, most journals have one editor-in-chief and several co-editors who work as final decisionmakers. Some journals also employ associate editors, either to work under co-editors to help find reviewers, or to work as “super reliable referees” who can be called upon on short notice.

In the new regime, I view journals as building out many more co-editors and many, many more associate editors. The associate editors can work under the co-editors and serve a similar role as (good and reliable) referees in the current regime: go through the manuscript with a fine-toothed comb and make sure everything checks out. Require revisions by the authors as you see fit. The 20-some-odd referee reports I do for journals each year could instead be handled as an associate editor at journals that most closely match my expertise.

In the new system of parallel submissions, other associate editors could be “recruiters” to identify good-looking papers that would fit with the journal’s tastes and goals. This is the beauty of simultaneous submission: it flips the competition onto editors and destroys journal market power.

4. Reform what counts as “certification” for tenure

The remaining factor that needs to change for all of this to work is the demand side of the journal prestige equation. Universities want to employ the best researchers they can. How do they know who is a good researcher? In the current system, they rely on journal quality and the reference letters of peer researchers to signal this. But in the new world I’m envisioning, there need to be other ways to measure researcher quality. This is the primary limitation Cunningham identified in his post entitled “Research and Publishing Are Now Two Different Things.”

This is by far the most difficult step because it requires universities to change. If you’re on board with me regarding centralized and distributed verification and reforming journals, the remaining question is, “So what counts as ‘research’ then?” The current model rewards publication quality and quantity, and, as Oks wrote, citations and “impact.” But in this new world, everyone can “publish” more articles. Do we keep the journal system for its archiving function, or do we shift to a system of arXiv-like open-source papers and just let the market decide which ideas are worth citing and pursuing? The Google paper “Attention is All You Need” got accepted into the prestigious NeurIPS Conference in 2017. Now it has over 239,000 citations. But it more or less exists as a pre-print with some public referee reports. Yes, this counts as a publication, but it doesn’t look like a traditional economics publication. Perhaps economics can be more flexible about what “counts” for tenure.

Why nothing will actually change

By now you have probably come to the conclusion that the reason nothing has changed since 2017 is that this sort of transition requires a lot of coordination from a lot of different stakeholders, many with opposing preferences. As Cochrane wrote, top journals enjoy their market power. And my idea of centralizing verification and contribution is unlikely to get off the ground without a lot of buy-in from existing journals, editors, referees, and researchers. Universities would also need to coordinate with each other, because they compete with each other for workers. And universities are not exactly known for being agile.

So the most likely outcome is that nothing changes at all. Or, at best, we get something sort of like the AEA RCT Registry. Absent broader change, the system will eventually break under its own weight and then what will happen? Will enough people in positions to act (journal editors, professional associations, university administrators) decide that a coordinated redesign is preferable to a broken mess? I’m not optimistic, but I’d love to be wrong.

It seems increasingly clear that parts of the scientific system need to change. At the same time, AI may give younger scientists new ways to navigate it more intelligently.

But I also worry that large-scale use of LLMs could push scientific visibility even further toward prestige and narrative, and away from merit and reality.

I wrote a short essay (on my substack) on reproducibility, prestige, and preclinical research, partly from first-hand experience.

Maybe this will finally force "science" to overhaul the process. I think it's been broken for quite a while, even if I couldn't tell you exactly when.